Dear Futurists,

1.) International Technoprogressive Conference, Tues 22 Feb

Friends of London Futurists in l’Association Française Transhumaniste (Technoprog) are hosting an international online technoprogressive conference on Tuesday next week – 22nd February. (Sorry for the short notice!)

The headline themes of the conference are “To be human, today and tomorrow” and “Converging visions from many horizons”.

The event will start at 4pm GMT (5pm Central European Time) and is scheduled to run for up to four hours.

The declared goal of the conference is to highlight and explore “the diversity, the convergences, and the growth” of people around the world who are happy to describe themselves as friends of transhumanism.

At the last count, over a dozen people are preparing to give short presentations at the event. To encourage focus, each presentation slot is restricted to four minutes. We’ll all need to be on our toes!

(Some speakers are recording videos of the presentations in advance – especially if the timeslot of the conference is awkward for them to participate live.)

In their presentations, speakers are asked to provide a brief summary of transhumanist-related activity in which they are involved, and then to make a proposal about “a concrete idea that could inspire positive and future-oriented people or organisations”. These proposals could address, for example, AI, enhancing human nature, equity and justice, accelerating science, existential risks, the Singularity, social and political angles, the governance of technology, superlongevity, superhappiness, or sustainable superabundance.

Speakers are also asked, ahead of the conference, to write down their proposal in 200 words or fewer, for distribution in a document that will be shared among all attendees.

Once all presentations have been made, attendees will have a chance to provide feedback on what they have heard – especially when they wish to support particular projects and to become involved in them.

If you want to register to attend, please click this Zoom registration link.

In addition, if you would like to join the set of people making presentations at this event, please let me know as soon as possible, mentioning the core idea of the proposal you would advance.

Image credit: the above graphic includes work by Pixabay user Sasin Tipchai.

2.) Last few days to respond to the survey on the rise of AGI

The number of people who have supplied answers to the questionnaire “The Rise and Implications of Artificial General Intelligence (AGI)” has now reached 112.

Many thanks to everyone who has already taken part! The responses so far have been fascinating.

This survey will be closed on 22nd February, so if you are considering supplying answers, please don’t delay.

As a reminder, this text is from the description of the survey:

The scope of AGI is different from the kinds of more narrowly focused AI systems in use today – which we will refer to as Artificial Narrow Intelligence (ANI).

Existing ANI systems typically have powerful capabilities in specific narrow contexts, such as processing mortgage and loan applications, route-planning, predicting properties of molecules, playing various games of skill, buying and selling shares, recognising images, and translating speech.

But in all these cases, the ANIs involved have incomplete knowledge of the full complexity of how humans interact in the real world. The ANI can fail when the real world introduces factors, situations, and unusual combinations of both that were not part of the data set of examples with which the ANI was trained. In contrast, humans in the same circumstance would be able to rely on capacities such as “common sense”, “general knowledge”, and intuition or “gut feel” to reach a better decision.

Hence, the difference from existing AIs is that a true AGI would have as much common sense, general knowledge, and intuition as any human. An AGI would be at least as good as humans at reacting to unexpected developments. So, an AGI would be able to pursue pre-specified goals as competently as (but much more efficiently than) a human, even in the kind of complex environments which would cause today’s ANIs to stumble.

Organisations will in principle be able to use AGI “without humans in the loop” to achieve faster results in more complex circumstances. Healthcare interventions could become far more timely and personalised. Businesses could adjust their product plans more quickly. Military units could achieve decisive advantage in conflict situations. Automated social media botnets could transform the public discussion before anyone has become aware of deliberately fabricated and misleading content. And so on.

How might the adoption of AGI impact economies, societies, and critical services such as education and healthcare? Which potential consequences of AGI deserve the most attention from government, business, and society? Which opportunities are the most exciting? Which risks are the most daunting? How should different parts of society prepare for the rise of AGI? And what scope is there to steer the way AGI is designed, configured, applied, and governed? This survey is designed to explore these issues. We look forward to seeing your views.

Image credit: the above graphic includes work by Pixabay user Geralt.

3.) Options for controlling artificial superintelligence

What are the best options for controlling artificial superintelligence?

Should we confine it in some kind of box (or simulation), to prevent it from roaming freely over the Internet?

Should we hard-wire into its programming a deep respect for humanity?

Should we prevent it from having any sense of agency or ambition?

Should we ensure that, before it takes any action, it always double-checks its plans with human overseers?

Should we create dedicated “narrow” intelligence monitoring systems, to keep a vigilant eye on it?

Should we build in a self-destruct mechanism, just in case it stops responding to human requests?

Should we insist that it shares its greater intelligence with its human overseers (in effect turning them into cyborgs), to avoid humanity being left behind?

More drastically, should we simply prevent any such systems from coming into existence, by forbidding any research that could lead to artificial superintelligence?

Alternatively, should we give up on any attempt at control, and trust that the superintelligence will be thoughtful enough to always “do the right thing”?

Or is there a better solution?

If you have clear views on this question, I’d like to hear from you.

I’m looking for speakers for a forthcoming London Futurists online webinar dedicated to this topic.

I envision three or four speakers each taking up to 15 minutes to set out their proposals. Once all the proposals are on the table, the real discussion will begin – with the speakers interacting with each other, and responding to questions raised by the live audience.

The date for this event remains to be determined. I will find a date that is suitable for the speakers who have the most interesting ideas to present.

Thanks to the people who have already expressed an interest in taking part. I’m still looking for one or two additional speakers who can provide extra diversity to the discussion.

As I said, please get in touch if you have questions or suggestions about this event.

Image credit: the above graphic includes work by Pixabay user Geralt.

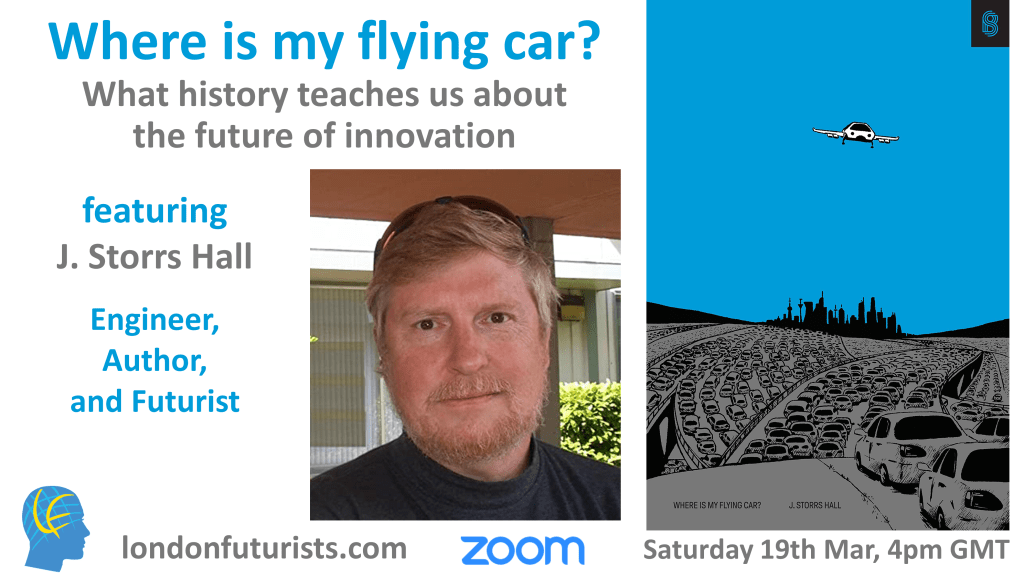

4.) Other forthcoming London Futurists events

I’ll close this newsletter with brief reminders about two other forthcoming London Futurists events.

Click on the images to find more details in each case.

And look out for more announcements coming soon…

// David W. Wood

Chair, London Futurists