Dear Futurists,

What emotions come to your mind when you think about the potential rise of Artificial General Intelligence (AGI)?

Are you filled with foreboding, or with excitement?

Or maybe the idea strikes you as boring – because you think AGI is infeasible, or is possible only in some far-distant future.

But whatever your reaction, I encourage you to read on. This entire newsletter is devoted to the question of how to assess the rise and implications of AGI.

1.) Community survey – your invitation to take part

In collaboration with Fast Future and with the UK node of the Millennium Project, London Futurists invites participation in a new survey on “The Rise and Implications of AGI”.

The survey describes itself as follows:

This survey seeks to collect feedback on the rise and implications of artificial general intelligence (AGI) from the broadest possible global community of artificial intelligence specialists, business leaders, policy makers, advisers and analysts, educators, technologists, economists, futurists, and social activists.

The survey is part of a longer process in which the results from one phase of inquiry will be fed back to the community to stimulate further dialogue in subsequent phases. Each headline question in the survey offers multiple choice options as possible answers, and is followed by a text box where respondents can comment on the question and their answers…

How might the adoption of AGI impact economies, societies, and critical services such as education and healthcare? Which potential consequences of AGI deserve the most attention from government, business, and society? Which opportunities are the most exciting? Which risks are the most daunting? How should different parts of society prepare for the rise of AGI? And what scope is there to steer the way AGI is designed, configured, applied, and governed? This survey is designed to explore these issues.

The survey also explains the difference between today’s AI – sometimes called Artificial Narrow Intelligence (ANI) – and the envisioned future AGI.

Depending on how long you think about some of the answers, the survey should take between 10 and 30 minutes to complete.

All questions are optional, so you can skip the ones where you prefer not to answer.

I look forward to sharing the survey findings in future newsletters.

To access the survey, click here.

Image credit: Pixabay user Geralt.

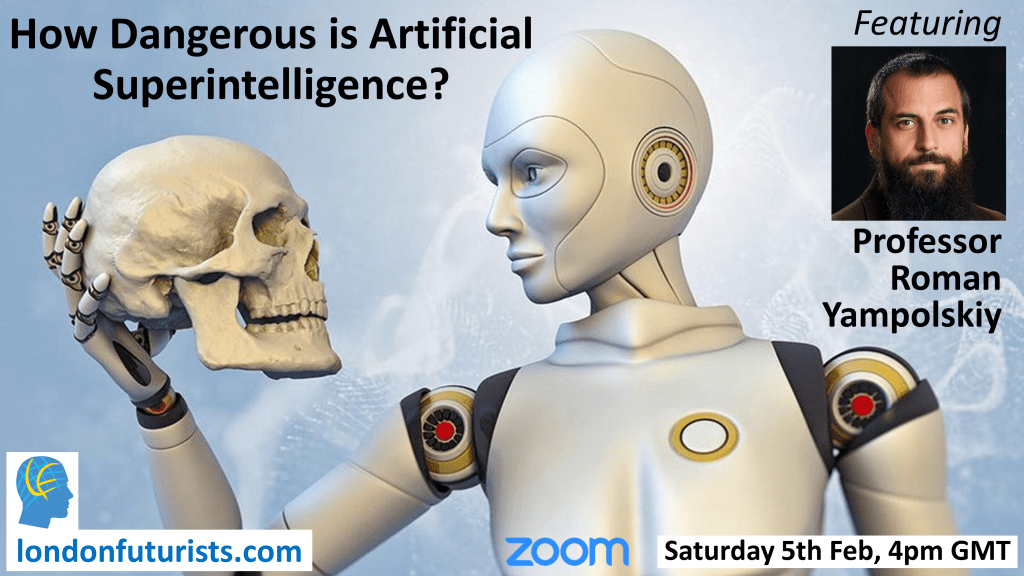

2.) How Dangerous is Artificial Superintelligence?

If you take the time to answer the above survey, you’ll likely appreciate more fully some aspects of the London Futurists webinar taking place on Saturday 5th February.

The headline title for the webinar is “How Dangerous is Artificial Superintelligence?”

Here’s how the meeting is described:

Researchers around the world are developing methods to enhance the safety, security, explainability, and beneficiality of “narrow” artificial intelligence. How well will these methods transfer into the forthcoming field of artificial superintelligence (ASI)? Or will ASI pose radically new challenges that will require a different set of solutions? Indeed, what hope is there for human designers to keep control of ASI when it emerges? Or is the subordination of humans to this new force inevitable?

These are some of the questions to be addressed in this London Futurists webinar by Roman Yampolskiy, Professor of Computer Science at the University of Louisville. Dr. Yampolskiy is the author of the book “Artificial Superintelligence: A Futuristic Approach” and the editor of the book “Artificial Intelligence Safety and Security”. Dr. Yampolskiy will also be answering audience questions about his projects and research.

Click here for more details about this webinar and to register to attend.

3.) Different routes to superintelligence

I’ve recently published another video for the “Singularity” section of the Vital Syllabus.

The video discusses different possible routes for the emergence of superintelligence, and refers to a ground-breaking article that Vernor Vinge wrote in 1993, “What is the Singularity?”.

Vinge’s article listed four ways in which superintelligence might arise:

- Individually powerful superintelligent computers

- Networks of relatively simple computers, in which a distributed superintelligence emerges from the interaction of these computers

- Humans becoming superintelligent as a result of highly efficient human-computer interfaces

- Human brains (or other biological systems) being significantly improved as a result of biotechnological innovation

The new video addresses three key questions that arise:

- Which of these four routes is the most likely to reach superintelligence first, given a sufficient amount of funding and support?

- Are there qualitative differences in the types of superintelligence that are likely to result from these four routes – for example, are some types more likely to treat humans with full benevolence, or to be easier to control?

- How will each of these four routes interplay with the latent superintelligence that is already present in hard-to-control business corporations?

The video doesn’t claim to provide full answers, but, as you can see for yourselves, it raises a number of points for further consideration.

So although this is the end of this newsletter, it isn’t the end of the analysis of the rise and impact of superintelligence. Look out for more answers – and, probably, new questions too – in the weeks and months ahead.

// David W. Wood

Chair, London Futurists