Dear Futurists,

Delusions, charlatans, and incantations: these were the three words used by the guest on the latest episode of the London Futurists Podcast to describe important aspects of human nature.

The guest went on to emphasise more positive aspects of humanity: how science can, sometimes, help protect us from delusions, and the special role of what he called “reciprocal accountability”.

This tension – between the better aspects of human nature, and some of its worse aspects – is made all the more complicated by the greater capabilities provided by fast-changing technologies. Possibilities for delusion are greater than ever before.

It’s in this context that London Futurists seeks to boost reciprocal insight, reciprocal awareness, and, yes, reciprocal accountability.

For some details, read on.

In case you’re wondering, the above image is based on a design that DALL·E 2 generated from a prompt including the words “delusions, charlatans, incantations”.

1.) Presenting gedanken experiments

The guest on the podcast episode I’ve just mentioned was the scientist and multi-award-winning science fiction author David Brin. Alongside his fiction, Brin is also known as the author of the non-fiction book The Transparent Society: Will Technology Force Us to Choose Between Privacy and Freedom?

The Transparent Society was first published in 1998. With each passing year, the questions raised in that book, and the solutions it proposes, seem ever more pressing. One aspect of this has been called Brin’s Corollary to Moore’s Law: every year, cameras will get smaller, cheaper, more numerous, and more mobile.

Brin also frequently writes online about topics such as space exploration, attempts to contact aliens, homeland security, the influence of science fiction on society and culture, the future of democracy, and much more besides.

As you might expect, the conversation on the podcast episode covered many highly relevant topics – not least the importance of thinkers such as Brin in “presenting gedanken experiments”.

You can find that episode here, or on any of the standard podcast platforms. For your convenience, I’m including as an appendix to this newsletter a listing of some of the topics covered in that episode.

2.) Reciprocal accountability: regulating AI

Our human desires to look after each other – to prevent each other coming to harm – are particularly challenged by the rise of ever-more powerful AI.

On the one hand, we want to prevent each other from falling foul of manipulation, bias, distortions, and other abuses that might arise from AI algorithms that are misconfigured, bug-laden, or poorly trained. On the other hand, we recognise that powerful AI has the potential to enable better education, more comprehensive healthcare, more affordable housing, widespread cleaner energy, and lower cost production of nutritious food (among many other consumer goods and services).

How do we strike the best balance between protecting against possible catastrophic harms and encouraging profound innovative benefits for humanity?

Lawmakers within the EU have proposed the EU AI Act. It’s an ambitious piece of legislation. However, various observers have expressed concerns.

These observers concede that the Act is well intentioned. However, they’re worried about unintended consequences that may arise. Some of these consequences could be profoundly bad.

You can read some analyses on this page, which is maintained by the Future of Life Institute.

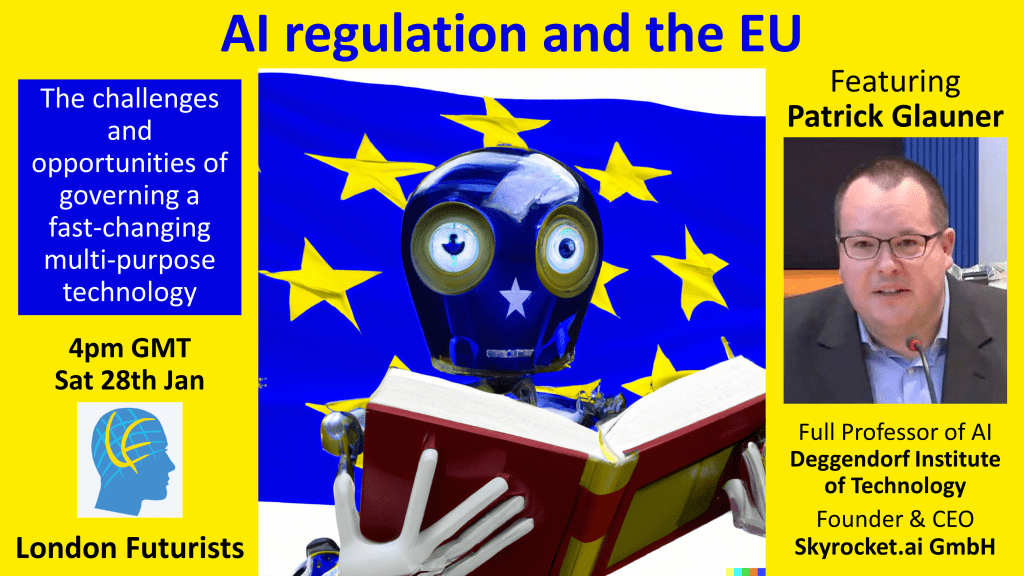

Our live webinar tomorrow features one of these critics, Professor Patrick Glauner from Deggendorf Institute of Technology (Germany). Glauner has made a thorough study of the issues that may arise from the EU AI Act, and he has his own recommendations for different approaches.

Click here for more details of tomorrow’s event and to register to attend.

3.) How misinformation spreads

Why are so many people so wrapped up in what the rest of us consider to be delusions?

Are they just … delusional?

It turns out that the answer is more complicated. We humans adopt beliefs, and commit ourselves to beliefs, not just for intellectual reasons or psychological reasons. Another set of factors is at work: social dynamics.

These are factors which, counter-intuitively, make it rational for each of us to discount the apparent counter-evidence of our own experiences, and to “go with the flow” of what seems to be a well-established group opinion.

Given that explanation, it follows that if we want to prevent each other from falling foul of dangerous delusions, we need to address social factors as well as philosophical and psychological ones.

Part of the solution echoes the message given by David Brin in the podcast episode mentioned earlier: improvements to the conduct and practice of science.

That’s the analysis we’ll be hearing in eight days’ time, on Saturday 4th February, from Cailin O’Connor, who is a Professor in the Department of Logic and Philosophy of Science, and a member of the Institute for Mathematical Behavioral Science at UC Irvine.

Click here for more details of this live webinar and to register to attend.

4.) Podcast review

While browsing Apple Podcasts this morning I was pleasantly surprised to notice the following review:

Fantastic podcast! Do yourself a favour and subscribe immediately!

If you are in anyway excited by new technology that could significantly impact our future and potentially extend and improve our lives in ways that are hard to fully fathom, you should find this a highly intelligent and thought provoking podcast, with each episode being like a workout for your brain, but one that leaves it feeling refreshed rather than tired.

As for the two well spoken co-hosts and their carefully selected interesting guests, they are certainly qualified to be here, and they manage to make their point without feeling the need to shout, which means that by the time you finish listening to an episode, you feel smarter, but not stressed out; so you could listen to this podcast even before you go to bed.

Very highly recommended!

What can I say? It’s a heart-warming commendation for the London Futurists Podcast.

The review was submitted by a podcast subscriber with the username “TheGadgetGuru”. So far as I know, he/she isn’t a relative of mine!

I might wonder whether they’re a relative of podcast co-host Calum Chace…

But to be serious again: reviews like this help to spread the word. Consider it part of reciprocal accountability. We all end up wiser if we take the time to highlight, for others to see, our own recommendations of material we believe to deserve more attention.

So, I encourage more listeners to the podcast to write a short review too.

You can start with the even simpler step of giving us a five-star rating. Assuming, that is, that you enjoy the episodes.

If you liked some episodes significantly less than others, please let me know. I don’t want to end up deluding myself about the quality of the content I’m producing!

5.) Appendix: podcast content highlights

As promised above, here for your convenience is a listing of some of the topics covered in Episode 23 of our podcast, featuring David Brin:

- Reactions to reports of flying saucers

- Why photographs of UFOs remain blurry

- Similarities between reports of UFOs and, in prior times, reports of elves

- Replicating UFO phenomena with cat lasers

- Changes in attitudes by senior members of the US military

- Appraisals of the Mars Rovers

- Pros and cons of additional human visits to the moon

- Why alien probes might be monitoring this solar system from the asteroid belt

- Investigations of “moonlets” in Earth orbit

- Looking for pi in the sky

- Reasons why life might be widespread in the galaxy – but why life intelligent enough to launch spacecraft may be rare

- Varieties of animal intelligence: How special are humans?

- Humans vs. Neanderthals: rounds one and two

- The challenges of writing about a world that includes superintelligence

- Kurzweil-style hybridisation and Mormon theology

- Who should we admire most: lone heroes or citizens?

- Benefits of reciprocal accountability and mutual monitoring (sousveillance)

- Human nature: Delusions, charlatans, and incantations

- The great catechism of science

- Two levels at which the ideas of a transparent society can operate

- “Asimov’s Laws of Robotics won’t work”

- How AIs might be kept in check by other AIs

- The importance of presenting gedanken experiments.

In the same way, the following listing may whet your appetite to check out Episode 22, which featured Alessandro Curioni, one of IBM’s most senior executives:

- Some background: 70 years of inventing the future of computing

- The role of grand challenges to test and advance the world of AI

- Two major changes in AI: from rules-based to trained, and from training using annotated data to self-supervised training using non-annotated data

- Factors which have allowed self-supervised training to build large useful models, as opposed to an unstable cascade of mistaken assumptions

- Foundation models that extend beyond text to other types of structured data, including software code, the reactions of organic chemistry, and data streams generated from industrial processes

- Moving from relatively shallow general foundation models to models that can hold deep knowledge about particular subjects

- Identification and removal of bias in foundation models

- Two methods to create models tailored to the needs of particular enterprises

- Using RLHF (Reinforcement Learning from Human Feedback) to modify models created by self-supervised learning

- Examples of new business opportunities enabled by foundation models

- Three “neuromorphic” methods to significantly improve the energy efficiency of AI systems: chips with varying precision, memory and computation co-located, and spiking neural networks

- The vulnerability of existing confidential data to being decrypted in the relatively near future

- The development and adoption of quantum-safe encryption algorithms

- What a recent “quantum apocalypse” paper highlights as potential future developments

- Changing forecasts of the capabilities of quantum computing

- IBM’s attitude toward Artificial General Intelligence and the Turing Test

- IBM’s overall goals with AI, and the selection of future “IBM Grand Challenges” in support of these goals

- Augmenting the capabilities of scientists to accelerate breakthrough scientific discoveries.

To round off this appendix, here are some of the topics covered in Episode 21, where our guest was Ignacio Cirac, the director of the Max Planck Institute of Quantum Optics in Germany:

- A brief history of quantum computing (QC) from the 1990s to the present

- The kinds of computation where QC can out-perform classical computers

- Likely timescales for further progress in the field

- Potential quantum analogues of Moore’s Law

- Physical qubits contrasted with logical qubits

- Reasons why errors often arise with qubits – and approaches to reducing these errors

- Different approaches to the hardware platforms of QC – and which are most likely to prove successful

- Ways in which academia can compete with (and complement) large technology companies

- The significance of “quantum supremacy” or “quantum advantage”: what has been achieved already, and what might be achieved in the future

- The risks of a forthcoming “quantum computing winter”, similar to the AI winters in which funding was reduced

- Other comparisons and connections between AI and QC

- The case for keeping an open mind, and for supporting diverse approaches, regarding QC platforms

- Assessing the threats posed by Shor’s algorithm and fault-tolerant QC

- Why companies should already be considering changing the encryption systems that are intended to keep their data secure

- Advice on how companies can build and manage in-house “quantum teams”.

// David W. Wood

Chair, London Futurists